Thanks to the efforts of major companies such as Nike and numerous startups, a groundswell of health-, fitness- and wellness-oriented consumer wearable technology and real wearable products has recently emerged.

In addition to health and fitness, there are many other wearable tech avenues – augmented reality and related tech for gamers, fashion technology, the toy market, the enterprise, and the military, to name the most obvious. Then there is also pure wearable tech - from new types of electronic cloth to new ways to manifest wireless personal and wearable communications technology - such as the use of Zenneck waves that travel over (not through) one's clothes to establish personal area wireless networks among different wearable devices.

The most visible wearable tech currently making waves is of course Google Glass - augmented reality “eyewear” that Google has managed to generate a lot of press for, even if at this point in time it really doesn't do much more than what you can already do with your smartphone. But it does have the charm of making you look Geeky-Cyborg.

That isn't a recognized fashion term yet, but if it catches on, you heard it here first.

Then there is a lot of wearable tech noise suddenly - or finally - being generated for the sake of sensational headlines around products that do not yet exist publicly in any form or fashion - Apple's rumored iWatch and a likely Samsung competitor.

We'll tackle the issue of the iWatch in a separate article.

And then there is wearable tech of an entirely different kind that encompasses professional healthcare. This includes all sorts of sensors, nanotechnology-based implants, and most importantly, new technology that in some cases combines external wearable devices with embedded sensors. For lack of a better word and for convenience we'll refer to these latter devices as bionic devices. The word "prosthesis" is applicable but doesn't really capture what the wearable tech can do.

The first two such breakthroughs - and they are legitimate breakthroughs - that have caught our eye are, well…bionic eyes. The first - Second Sight's Argus II - has been fairly well covered. The second - the Alpha IMS system - has only garnered the occasional mention, but both are quite amazing. Finally, we'll look at a bionic hand that connects to a person's actual nervous system and allows the patient to actually feel things.

Argus II

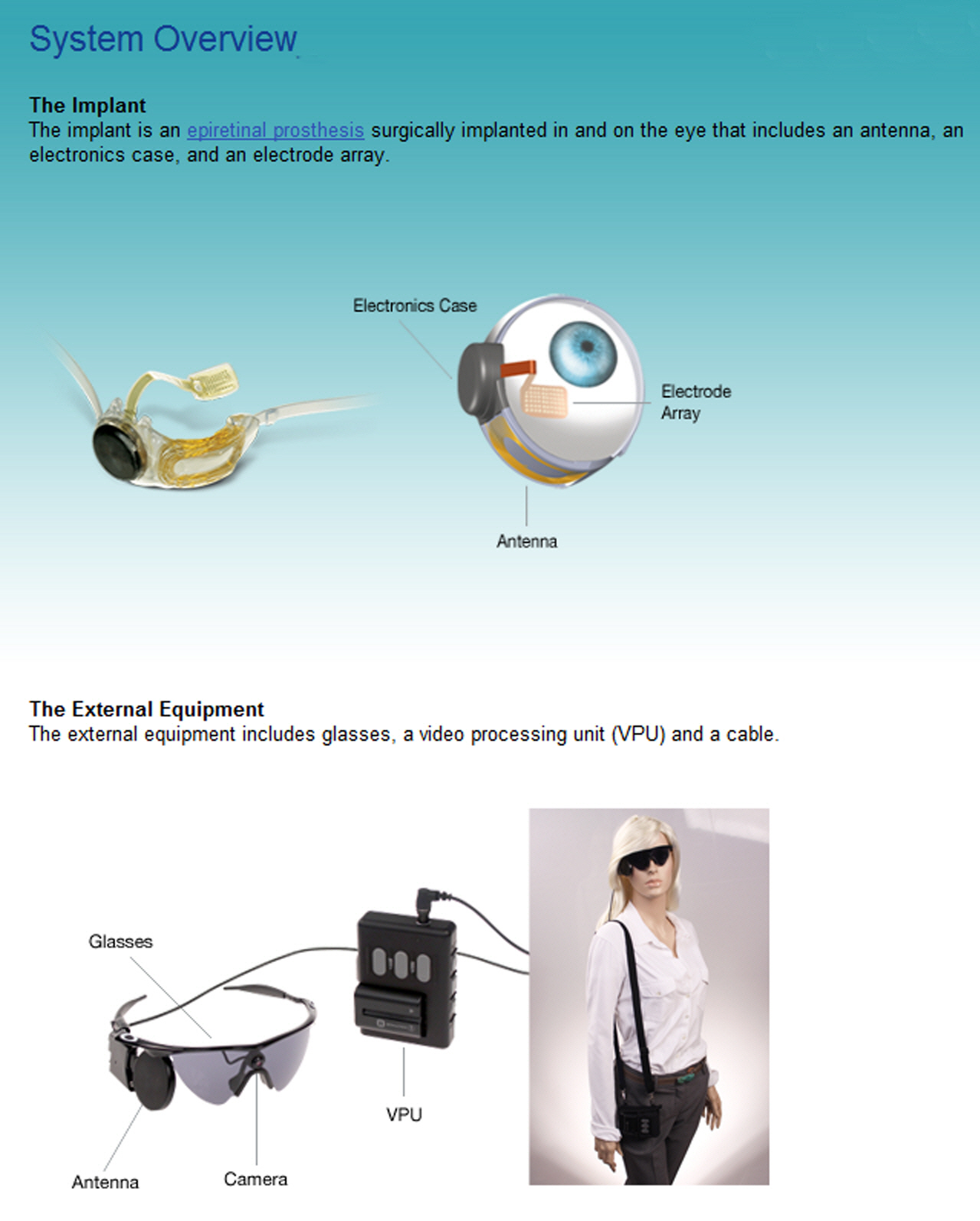

Several weeks ago the U.S. Food and Drug Administration granted its approval for Second Sight to bring its new Argus II bionic eye technology to the United States. It had earlier been approved for use in Europe, but coming to the US obviously brings it much closer to home for us. What is it, and what does it do? Before we go on, here is an overall system view of it:

As is shown, it is a system that consists of a powered eye implant sensor, which in turn communicates with an external wearable device that includes a camera and a video processing unit. Medically speaking we can refer to it as a retinal prosthesis, though it relies on the external wearable tech and on wireless communications as well.

The Argus II isn't in any sense a "general eye replacement" - it is specifically designed to treat a disease known as Retinitis Pigmentosa (RP). RP is caused by abnormalities of the photoreceptors (rods and cones) of the retina, and inevitably leads to progressive sight loss. RP can manifest itself early in life, though many are afflicted with it in later years. Unfortunately, when it happens in later years it happens quickly and leaves most people to deal with the trauma of sudden loss of vision at an age when it becomes almost impossible to adapt. We will return to this latter issue.

Symptoms include the inability to adapt to shifts from light to dark or from dark to light, night blindness, and the degeneration of peripheral vision (tunnel vision). It is also possible for a person's central vision to be impaired first, which causes people to look at objects from the sides of their eyes. Color vision can also be affected, and the most severe cases lead to total loss of vision. It is estimated that as many as 100,000 people are afflicted with RP in the United States.

RP is a retinal disease and as such the optic nerve itself remains intact, so that it remains available to external stimulus. This is critical to the functioning of the Argus II. In case you missed it in your high school science classes or don't remember, the retina converts light rays into electrical impulses that then travel through the optic nerve to the brain, where they are next assembled into images.

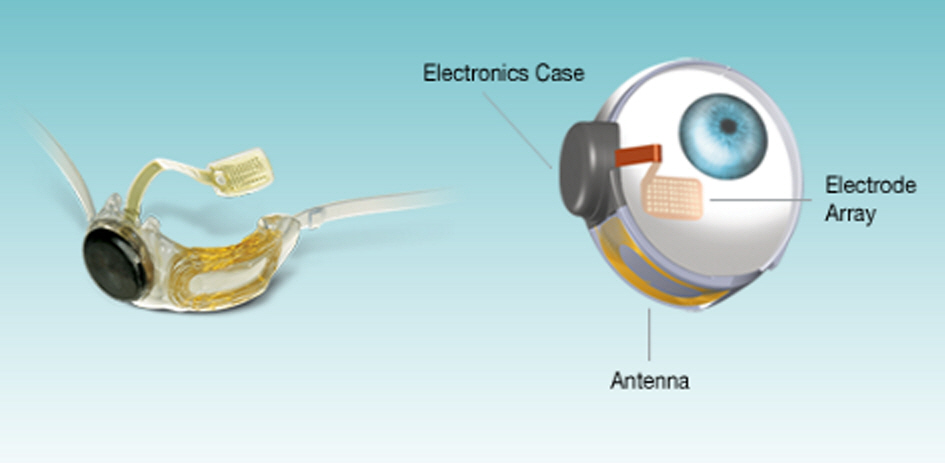

As the close-up image below shows, the implant piece of the technology consists of an array of 60 electrodes that communicate directly with the optic nerve by stimulating still-active cells. Essentially the electrode array replaces the eye's own damaged photoreceptors. A tiny package of electronics that includes the power source and a wireless antenna completes the implant, as the image shows.

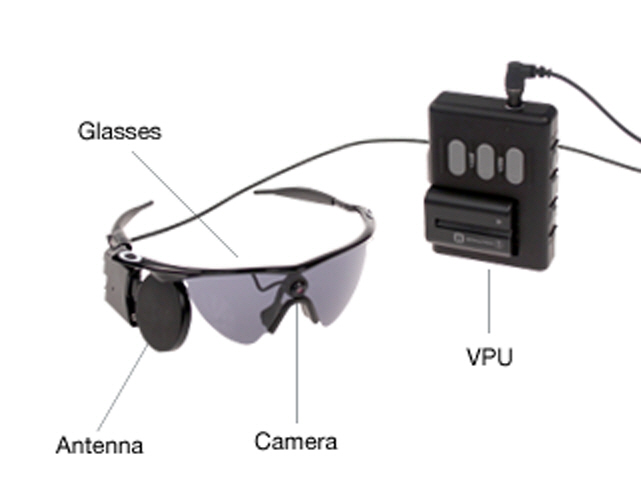

Once the implant is in place, the next step is to transmit external images to it that can then be relayed to the perfectly functioning optic nerve. That leads us to the wearable tech pieces of the system. These consist of a pair of glasses (no, they don't have to be cool-looking shades - they can just as well be a pair of regular glasses) that support a video camera and a wireless transmitter/antenna. The close-up image below provides more detail.

Patients do need to turn their heads to aim the video camera. The camera captures whatever is in front of it and sends the video stream to the external video processor, which translates the stream into the data the implanted electrode array needs to stimulate the optic nerve, which in turn delivers the signals the brain needs to form images. The key here is that the communication between the glasses and the implanted system is wireless. This makes the external system easily removable.
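To make the video processor's translation step a little more concrete, here is a minimal sketch of how a camera frame might be reduced to one brightness value per electrode. It is purely illustrative and is not Second Sight's actual algorithm: the 6 x 10 grid layout (for the 60 electrodes), the block-averaging approach, and the name `frame_to_electrode_levels` are all our own assumptions.

```python
from typing import List

Frame = List[List[int]]  # grayscale pixels, 0 (black) to 255 (white)


def frame_to_electrode_levels(frame: Frame, rows: int = 6, cols: int = 10) -> List[List[int]]:
    """Average a grayscale frame down to one brightness value per electrode.

    Assumption: the 60 electrodes are laid out as a rows x cols grid and each
    electrode is driven by the mean brightness of its patch of the frame.
    """
    height, width = len(frame), len(frame[0])
    if height < rows or width < cols:
        raise ValueError("frame is smaller than the electrode grid")

    levels = []
    for r in range(rows):
        # Pixel rows covered by electrode row r.
        y0, y1 = r * height // rows, (r + 1) * height // rows
        row_levels = []
        for c in range(cols):
            # Pixel columns covered by electrode column c.
            x0, x1 = c * width // cols, (c + 1) * width // cols
            block = [frame[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row_levels.append(sum(block) // len(block))
        levels.append(row_levels)
    return levels


# Hypothetical 60 x 100 test frame: left half dark, right half bright.
frame = [[0 if x < 50 else 255 for x in range(100)] for _ in range(60)]
levels = frame_to_electrode_levels(frame)
print(len(levels) * len(levels[0]))  # one value per electrode
print(levels[0])
```

Even this toy version shows why so few electrodes yield only coarse shapes: an entire patch of the camera image collapses into a single stimulation level.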

The Argus II will become available in the US later in 2013, but even so we need to keep in mind that we are still in the early days of the technology. Developing the sensor array that is implanted in the eye itself has been a huge technical challenge that has finally been overcome. Second Sight, which was founded in 1998, created an early system as far back as 2002 that required a team of four surgeons to perform an eight-hour procedure, and of course wireless technology wasn't available then. The implant surgery for the new technology requires only two hours.

The current technology doesn't suddenly restore vision - that is still a long way off. What the Argus II is able to do is allow patients to see shapes and forms and to detect large letters. The images, shapes, forms and letters are seen in black and white. The major benefit here is that enough sight is restored to overcome the traumatic loss of vision we noted earlier, which gives patients a huge increase in their ability to adapt to the disease and to continue to function capably.

It is a huge and progressive step - the eye, due to its overall anatomy and complexity, has proven to be a difficult challenge to overcome - Second Sight and others have been working on the problem for two decades. Next steps in the technology will include refining the shapes, images and forms that a patient can discern, and adding color. We anticipate that availability in the United States will drive new investments and an increase in the rate of progress.

Under the current FDA approval, perhaps as many as 15,000 people in the US will be eligible for Argus II for RP treatment. As of today only seven hospitals - based in Pennsylvania, Texas, California, New York, and Maryland - will be equipped to provide the surgery, but that should change quickly. Exclusive of surgery costs, the technology itself has a current cost of $150,000. Whether or not insurance will cover the costs remains an open question, but we certainly hope that will be the case.

It is worth noting that given the way the technology works it becomes entirely possible, as technology improves, to bypass the eye and optic nerve completely and to send the video signals straight to an electrode array implanted in the visual cortex of the brain. It sounds like science fiction - the only difference is that it is real.

Alpha IMS

The Alpha IMS system has been developed in Germany by researchers at the University of Tübingen. The technology is more sophisticated than the Argus II, but not nearly as far down the road to reality as an actual product. It does, however, suggest potential directions for where the Argus II is likely to go. To date, there has been a successful nine-patient test project for the Alpha IMS, and additional testing is now underway in Hungary, the U.K. and Hong Kong (certainly an interestingly diverse collection of locations).

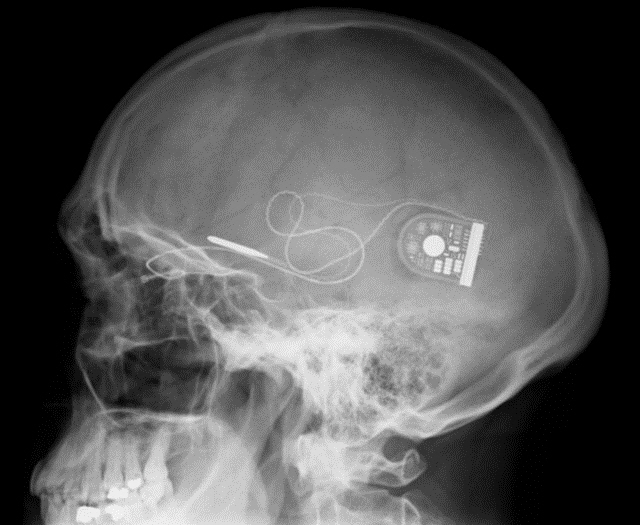

As the image shows, the Alpha IMS implant is entirely embedded within the patient, though there is also an external device located behind the ear that allows a patient to adjust the brightness level of what they see.

Just as interesting, the entire embedded device is powered wirelessly through a battery that can simply sit in one's pocket.

Unlike the Argus II, which relies on an external video camera, the Alpha IMS relies on visual signals that enter the eye directly. This is accomplished with 1,500 electrodes that are implanted underneath the patient's retina. Those electrodes in turn deliver a stream of data to a microprocessor that then sends the signals to the visual cortex in the brain. As with the Argus II, current technology allows the patient to see only in black and white, but patients will be able to move their eyes to look about - recall that the Argus II requires patients to aim the system's video camera by turning their heads.

The following video provides an animation of how the Alpha IMS works.

To date, nine patients have had the Alpha IMS embedded, and of the nine the surgery was successful for eight patients. Unfortunately, the ninth patient's optic nerve head was touched during surgery, which resulted in the implant failing.

Keep in mind that 1,500 electrodes - 25 times as many as the 60 used by the Argus II - provide much more resolution. The Alpha IMS patients - who were for all practical purposes blind - say that they can now detect mouth shapes such as smiles, whether or not a person is wearing glasses, and everyday objects such as telephones.

The Alpha IMS system also allows patients to see farther out. For example, patients have been able to make out the horizon, houses, trees and rivers. One patient was reportedly able to identify automobiles at night by recognizing their headlights. Keep in mind that we are talking here about people who were previously unable to see anything at all.

An excellent albeit formal paper on the Alpha IMS is available for download as a PDF.

Magical Mechanical Fingertips

A new mechanical arm and hand has been designed and developed by Dr. Silvestro Micera at the École Polytechnique Fédérale de Lausanne in Switzerland. We suggest it is magical, but in fact it is simply another next step in wearable technology of the third kind - an artificial arm and hand that not only provides a working limb but also allows a patient to feel rudimentary sensations from the hand and fingers.

The hand piece of it is shown below, and it works by tapping directly into the median and ulnar nerves in the arm, sending signals directly to the brain. But the device is in fact much more capable than that, in that communication is bi-directional - it also allows signals from the brain to reach and to some degree control some aspects of the mechanical arm and hand. What this means is that it can be controlled directly by the brain's motor signals rather than, as is otherwise done today, indirectly through muscles in the arm.

The more important breakthrough however is in allowing the brain to receive signals from the hand, which in turn manifests itself by providing the patient with functional sensations in the limb. Dr. Micera has been working on tactile mechanical capabilities for a number of years but this next generation of technology is a major step forward in terms of what patients are now able to feel and do.

As the image shows, the mechanical hand has sensory zones in the wrist, palm, and fingertips. The goal is to expand the hand/arm capabilities so that the fingertips can do such things as register pressure while simultaneously allowing the wrist to accomplish such things as sending signals indicating its position. The ability to actually sense position and grip pressure will be a significant next step beyond where we are today - which requires visual observation by the patient of what the patient is actually trying to accomplish.

Dr. Micera presented his work at the 2013 meeting of the American Association for the Advancement of Science, recently held in Boston. As Dr. Micera makes clear, the work is challenging. For example, establishing the actual neural connection requires an implant. However, with current technology the implant can only be left in place for about a month at a time.

Dr. Micera estimates that further development and improvements to provide a fully functional limb with a longer timeframe for the implant to remain in place will take at least two years before it is ready for complete field testing. In the immediate future he and his team will collaborate with the Italian Ministry of Health to continue initial clinical trials.

Wearable technology is about many things, but its serious side within the medical profession is perhaps where its most profound impact is to be found. We can of course see many opportunities to tie all manner of wearable tech into it. Perhaps Google Glass and new smart cloth tech will play a role here as new possibilities are integrated into more holistic wearable tech medical solutions. In any case, we are merely at the beginning of the adventure and we can now begin to anticipate exciting and rapid gains in how it all shapes up.

Edited by Braden Becker
