As Twitter described in a blog post, “Spam on Twitter is different from traditional spam, primarily because of two aspects of our platform: Twitter exposes developer APIs to make it easy to interact with the platform and real-time content is fundamental to our user’s experience.” With this in mind, it is easy to see how spammers can learn just about everything there is to know about how Twitter’s anti-spam systems work.

If you’ve used Twitter for any length of time, you have most likely come across a large amount of spam. However, you may have noticed that you are getting a lot less spam on Twitter lately. Today, Twitter gave us some insight into why. It seems that a recently developed system allows the social network to create anti-spam bot code almost immediately. This new system has been dubbed BotMaker.

Twitter described the new system as follows: “So, to fight spam on Twitter, we built BotMaker, a system that we designed and implemented from the ground up that forms a solid foundation for our principled defense against unsolicited content. The system handles billions of events every day in production and we have seen a 40 percent reduction in key spam metrics since launching BotMaker.”

This is reflected in the image below:

Image via Twitter

It seems that with BotMaker, engineers can set up rules within just a few seconds that automatically track and take down spammers. In some instances, this happens even before the spammers manage to post anything. In addition to barring known spam links, the bots can flag suspicious behavior. For instance, if a lot of people block an account after it sends a tweet, that account will be watched very closely. BotMaker also looks at long-term behavior, which means that spammers who manage to slip through the cracks are not necessarily safe.

The main goal is to eliminate, or at least effectively reduce, the amount of spam that users see, which is a challenge. A few of the key principles that guided the design of BotMaker include:

- Prevent spam content from being created. By making it as hard as possible to create spam, we reduce the amount of spam the user sees.

- Reduce the amount of time spam is visible on Twitter. For the spam content that does get through, we try to clean it up as soon as possible.

- Reduce the reaction time to new spam attacks. Spam evolves constantly. Spammers respond to the system defenses and the cycle never stops. In order to be effective, we have to be able to collect data, and evaluate and deploy rules and models quickly.

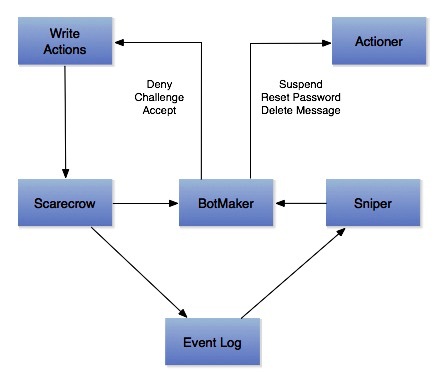

Twitter explains how BotMaker works: “BotMaker achieves these goals by receiving events from Twitter’s distributed systems, inspecting the data according to a set of rules, and then acting accordingly. BotMaker rules, or bots as they are known internally, are decomposed into two parts: conditions for deciding whether or not to act on an event, and actions that dictate what the caller should do with this particular event.”
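To make the condition/action split concrete, here is a minimal Python sketch. It is not Twitter's rule language or API. The `Bot` class, the `evaluate` function, the event fields (`link_domain`, `blocks_after_tweet`), the action names and the threshold of 50 are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# An event is modeled as a plain dictionary (hypothetical shape).
Event = Dict[str, object]


@dataclass
class Bot:
    """A rule made of a condition and the action to return when it matches."""
    name: str
    condition: Callable[[Event], bool]
    action: str


def evaluate(bots: List[Bot], event: Event) -> List[str]:
    """Return the action of every bot whose condition matches the event."""
    return [bot.action for bot in bots if bot.condition(event)]


# Hypothetical blocklist and rules, for illustration only.
KNOWN_SPAM_DOMAINS = {"spam.example"}

bots = [
    Bot("known_spam_link",
        lambda e: e.get("link_domain") in KNOWN_SPAM_DOMAINS,
        "deny"),
    Bot("many_blocks_after_tweet",
        lambda e: e.get("blocks_after_tweet", 0) >= 50,
        "watch"),
]

print(evaluate(bots, {"link_domain": "spam.example"}))
print(evaluate(bots, {"blocks_after_tweet": 100}))
```

Keeping the condition separate from the action means a new rule is just a new entry in the list, which fits the stated goal of deploying rules quickly.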

Twitter has broken its spam defense down into the following three stages:

- Real time (Scarecrow): Scarecrow detects spam in real time and prevents spam content from getting into the system, and it must run with low latency. Being in the synchronous path of all actions enables Scarecrow to deny writes and to challenge suspicious actions with countermeasures like CAPTCHAs (an acronym for “Completely Automated Public Turing test to tell Computers and Humans Apart”).

- Near real time (Sniper): For the spam that gets through Scarecrow’s real-time checks, Sniper continuously classifies users and content off the write path. Some machine learning models cannot be evaluated in real time due to the nature of the features that they depend on. These models get evaluated in Sniper. Since Sniper is asynchronous, we can also afford to look up features that have high latency.

- Periodic jobs: Models that have to look at user behavior over extended periods of time and extract features from massive amounts of data can be run periodically in offline jobs since latency is not a constraint. While we do use offline jobs for models that need data over a large time window, doing all spam detection by periodically running offline jobs is neither scalable nor effective.
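The difference between the synchronous and asynchronous stages can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Twitter's implementation. The `Pipeline` class, its method names, the `slow_score` callback, the `0.8` threshold and the blocklist are all hypothetical.

```python
from collections import deque

# Hypothetical blocklist used by the cheap, synchronous check.
KNOWN_SPAM_DOMAINS = {"spam.example"}


class Pipeline:
    def __init__(self):
        self.accepted = []          # events that were written
        self.async_queue = deque()  # events awaiting slower checks
        self.flagged = set()        # users flagged by the slower checks

    def write(self, event):
        """Synchronous stage: a cheap check that can deny the write."""
        if event.get("link_domain") in KNOWN_SPAM_DOMAINS:
            return "deny"
        self.accepted.append(event)
        self.async_queue.append(event)  # hand off for later inspection
        return "allow"

    def run_async_checks(self, slow_score, threshold=0.8):
        """Asynchronous stage: drain the queue off the write path.

        slow_score stands in for a model that is too slow to run
        while the user is waiting on the write.
        """
        while self.async_queue:
            event = self.async_queue.popleft()
            if slow_score(event) >= threshold:
                self.flagged.add(event["user"])


pipeline = Pipeline()
print(pipeline.write({"user": "a", "link_domain": "spam.example"}))
print(pipeline.write({"user": "b", "text": "hello"}))
pipeline.run_async_checks(lambda event: 0.9)  # pretend the model is suspicious
print(pipeline.flagged)
```

The write path only pays for the cheap check. Anything expensive runs after the write has been accepted, so latency is unaffected but spam can still be cleaned up shortly afterward.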

The chart below shows how this looks when BotMaker runs:

Image via Twitter

Twitter is already seeing a return on its investment, with key spam metrics down by 40 percent since BotMaker launched. While you may notice that you are receiving less spam, what you should not notice is BotMaker at work. It is designed to fight only certain forms of spam as they arrive and to save more time-consuming tasks for later, so it should not slow the service down. The reduction in spam suggests that the approach is proving effective.
